Deploying F5 BIG-IP LTM VE within GNS3 (part-1)

September 9, 2016

One advantage of deploying VMware (or VirtualBox) machines inside GNS3 is the rich networking environment it provides. There is no need to worry about interface types: vmnet or private, shared or ad hoc.

In GNS3, it is as simple and intuitive as choosing a node interface and connecting it to any other node interface.

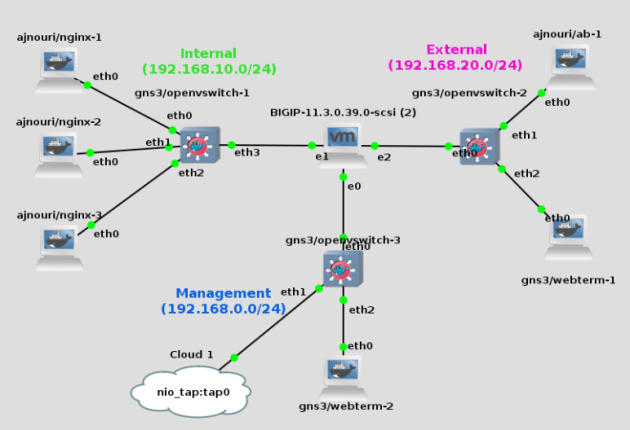

In this lab, we test a basic F5 BIG-IP LTM VE deployment within GNS3. The only virtual machine used in this lab is the F5 BIG-IP; all other devices are Docker containers:

- Nginx Docker containers for internal web servers.

- ab (Apache Benchmark) Docker container for the client used for performance testing.

- gns3/webterm containers used as Firefox browser for client testing and F5 web management.

Outline:

- Docker image import

- F5 Big-IP VE installation and activation

- Building the topology

- Setting F5 Big-IP interfaces

- Connectivity check between devices

- Load balancing configuration

- Generating client http queries

- Monitoring Load balancing

Devices used:

- gns3/openvswitch: OVS switch container.

- gns3/webterm: GUI browser container.

- ajnouri/nginx: nginx web server container.

- ajnouri/ab: Apache Benchmark container to generate client requests.

- F5 Big IP VE: F5 Load balancer Virtual Machine.

Environment:

- Debian host GNU/Linux 8.5 (jessie)

- GNS3 version 1.5.2 on Linux (64-bit)

System requirements:

- F5 Big IP VE requires 2GB of RAM (recommended >= 8GB)

- VT-x / AMD-V support

The only virtual machine used in the lab is the F5 BIG-IP; all other devices are Docker containers.

1. Docker image import

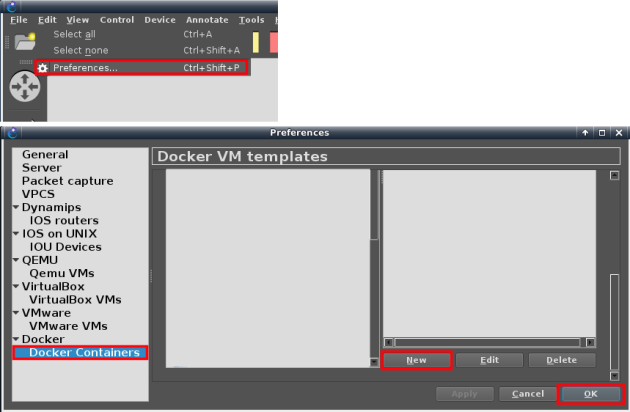

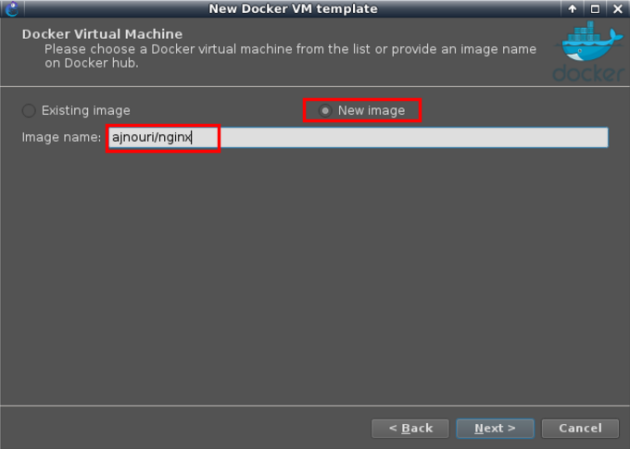

Create a new Docker template in GNS3: go to Edit > Preferences > Docker > Docker containers, then click “New”.

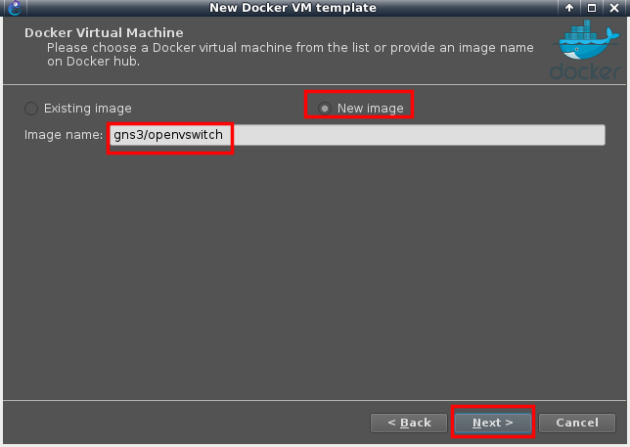

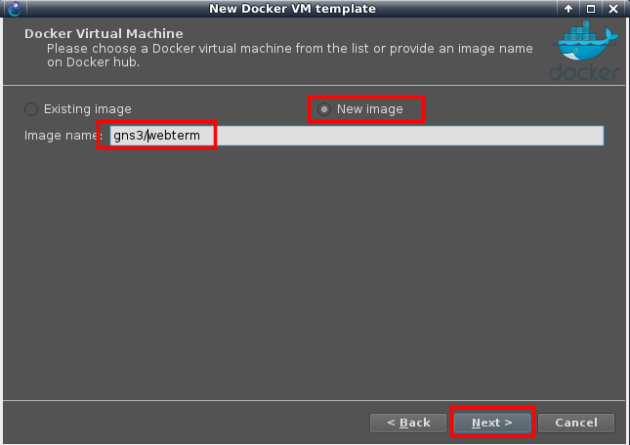

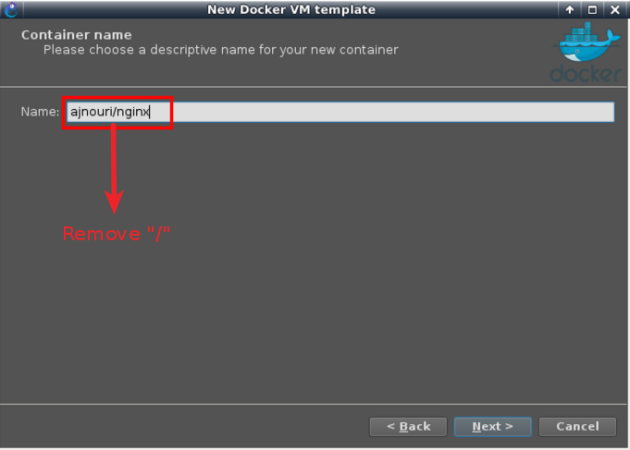

Choose the “New image” option and type the Docker image name in the format given in the “Devices used” section (<account>/<repo>), then choose a name for it (without the slash “/”).

Note:

By default, GNS3 derives a name in the same format as the Docker registry (<account>/<repo>), which can cause an error in some earlier versions. In the latest GNS3 versions, the slash “/” is removed from the derived name.
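As a minimal sketch of the naming rule in the note above, the helper below derives a slash-free template name from a Docker image name and can optionally pre-pull the lab images on the GNS3 host. The function names `template_name` and `pull_lab_images` are hypothetical, replacing the slash with a hyphen is only one possible choice, and pre-pulling assumes the Docker CLI is available on the GNS3 host.

```shell
#!/bin/sh
# Images listed in the "Devices used" section of this article.
LAB_IMAGES="gns3/openvswitch gns3/webterm ajnouri/nginx ajnouri/ab"

# Hypothetical helper: turn "account/repo" into a slash-free name.
template_name() {
    printf '%s\n' "$1" | tr '/' '-'
}

# Hypothetical helper: pre-pull every lab image (requires the docker CLI).
pull_lab_images() {
    for image in $LAB_IMAGES; do
        echo "Pulling $image (suggested template name: $(template_name "$image"))"
        docker pull "$image" || return 1
    done
}
```

Calling `template_name gns3/openvswitch` prints `gns3-openvswitch`; running `pull_lab_images` fetches the four images ahead of time.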

Installing gns3/openvswitch:

Set the number of interfaces to eight and accept default parameters with “next” until “finish”.

– Repeat the same procedure for gns3/webterm

Choose a name for the image (without slash “/”)

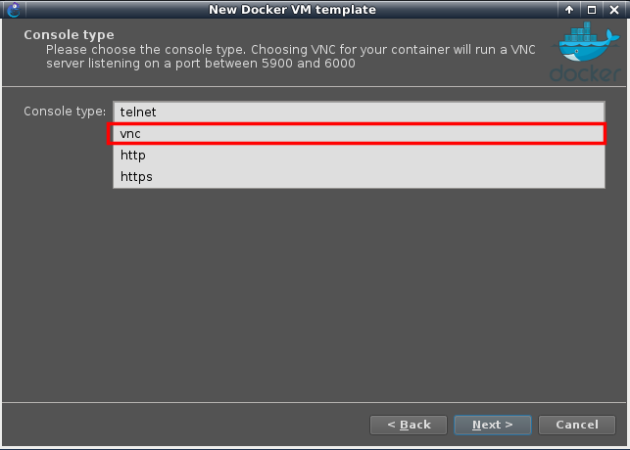

Choose vnc as the console type to allow Firefox (GUI) browsing

And keep the remaining default parameters.

– Repeat the same procedure for the image ajnouri/nginx.

Create a new image with name ajnouri/nginx

Name it as you like

And keep the remaining default parameters.

2. F5 Big-IP VE installation and activation

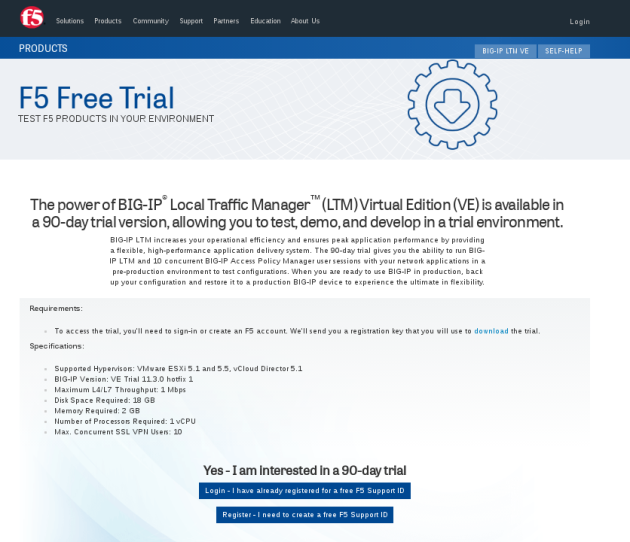

– From the F5 site, download the file BIG-11.3.0.0-scsi.ova. Go to https://www.f5.com/trial/big-ip-ltm-virtual-edition.php

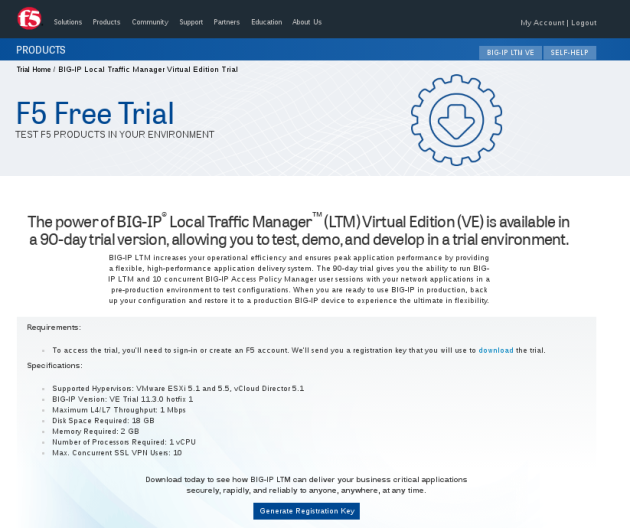

You’ll have to request a registration key for the trial version that you’ll receive by email.

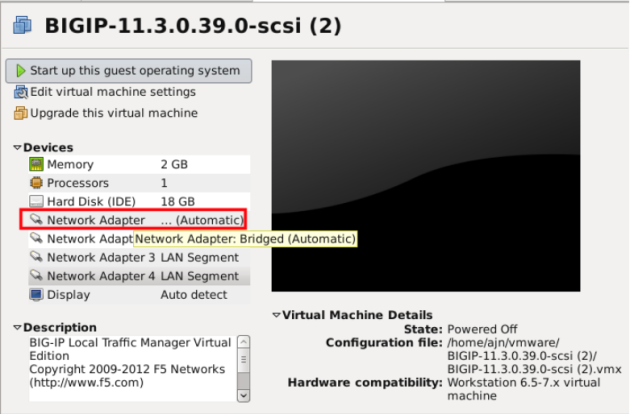

Open the ova file in VMWare workstation.

– To register the trial version, bridge the first interface to your network connected to Internet.

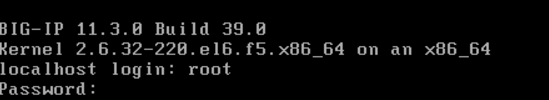

– Start the VM and log in to the console with root/default

– Type “config” to access the text user interface.

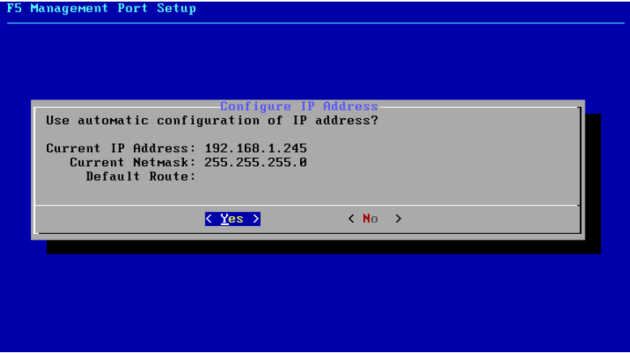

– Choose “No” to configure a static IP address, a mask and a default gateway in the same subnet as the bridged network, or “Yes” if you have a DHCP server and want a dynamic IP.

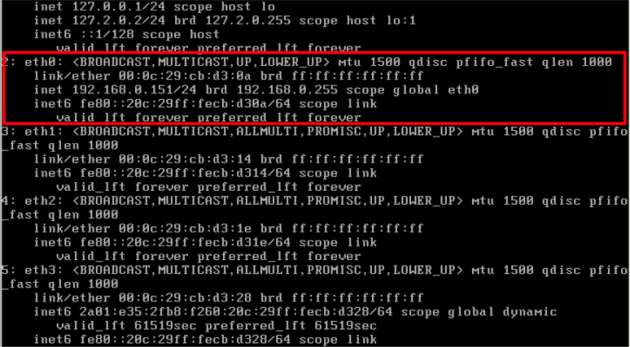

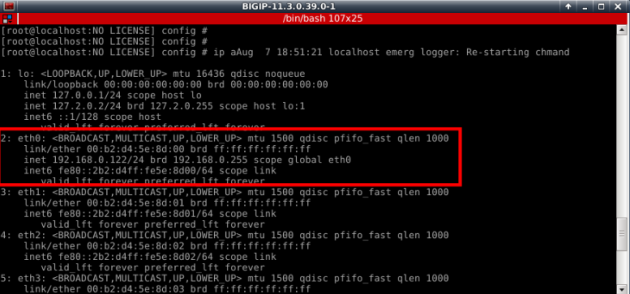

– Check the interface IP.

– Ping an Internet host (e.g. gns3.com) to verify connectivity and name resolution.

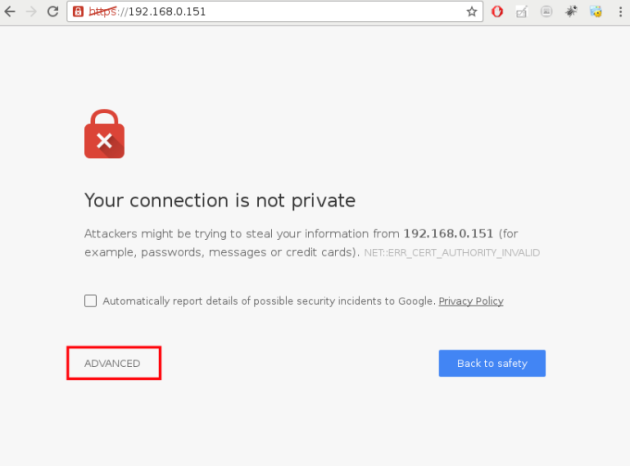

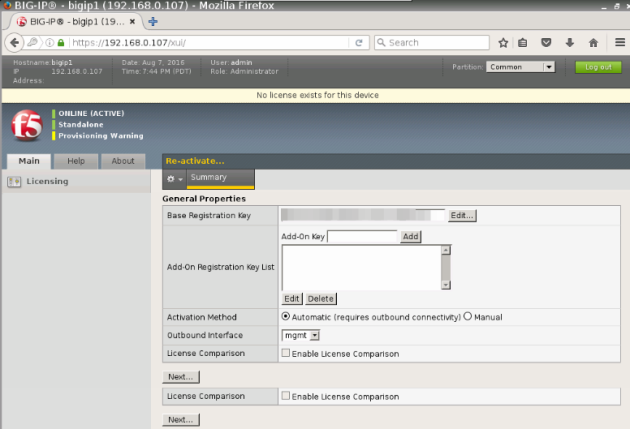

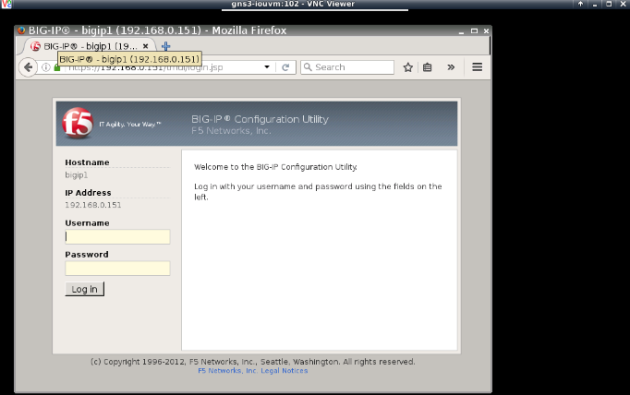

– Browse to the BIG-IP interface IP and accept the certificate.

Use admin/admin as login credentials.

Put the key you received by email in the Base Registration Key field and click “Next”. Wait a couple of seconds for activation, and you are good to go.

Now you can shut down the F5 VM.

3. Building the topology

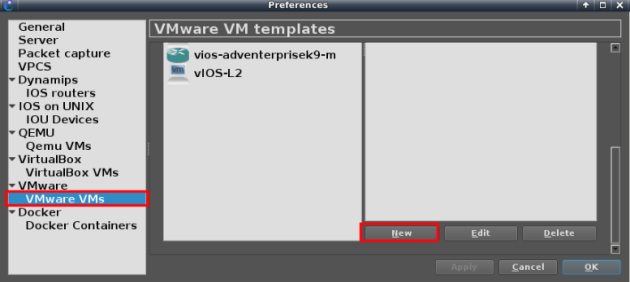

Importing F5 VE Virtual machine to GNS3

From GNS3 “preference” import a new VMWare virtual machine

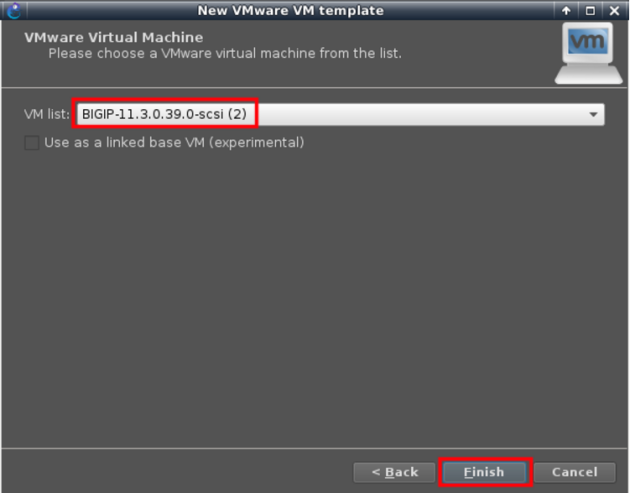

Choose BIG-IP VM we have just installed and activated

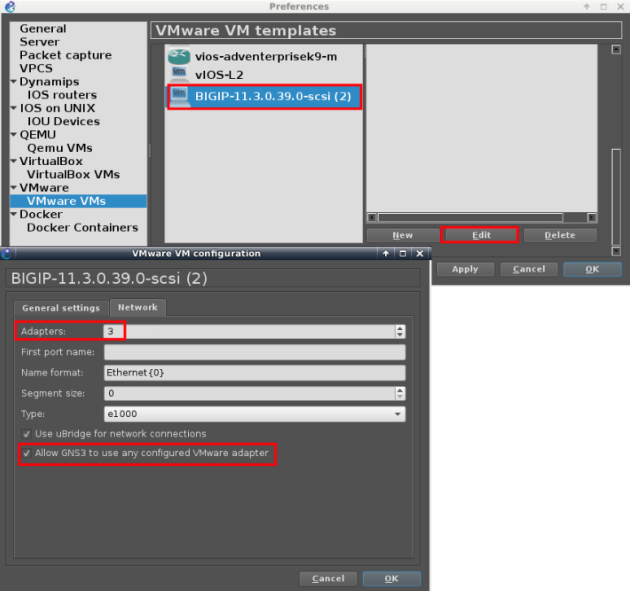

Make sure to set minimum 3 adapters and allow GNS3 to use any of the VM interfaces

Topology

Now we can build our topology using BIG-IP VM and the Docker images installed.

Below is an example topology in which we import the F5 VM and add some containers.

Internal:

– 3 nginx containers

– 1 Openvswitch

Management:

– GUI browser webterm container

– 1 Openvswitch

– Cloud mapped to host interface tap0

External:

– Apache Benchmark (ab) container

– GUI browser webterm container

Notice that the BIG-IP VM interface e0, the one previously bridged to the host network, is now connected to a browser container for management.

Note: I attached the host interface “tap0” to the management switch because, for some reason, ARP doesn’t work on that segment without it.

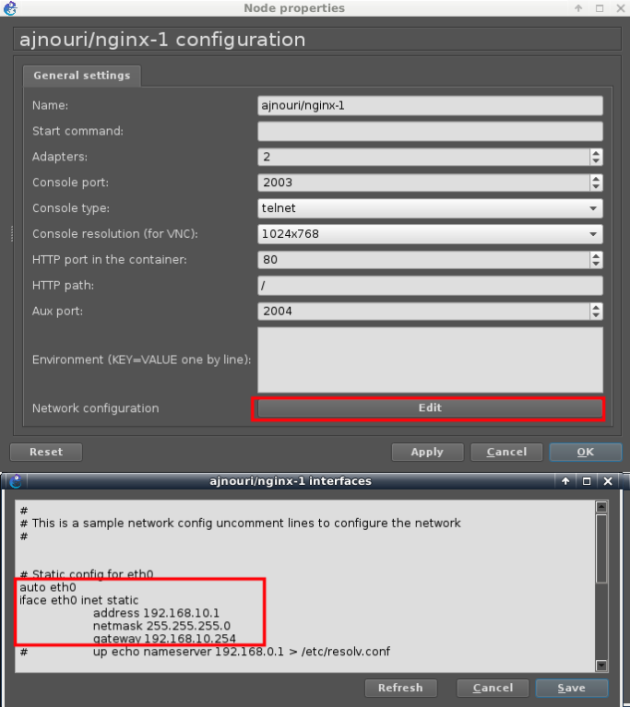

Address configuration:

– Assign each of the nginx containers an IP in a subnet of your choice (ex: 192.168.10.0/24)

In the same manner:

192.168.10.2/24 for ajnouri/nginx-2

192.168.10.3/24 for ajnouri/nginx-3
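GNS3 lets you edit the network configuration of a Docker node before starting it. Assuming the containers use a Debian-style `/etc/network/interfaces` file, the sketch below prints a static stanza for a given address. The function name `static_stanza`, the interface name `eth0` and the dotted netmask are assumptions for illustration; the optional fourth argument adds a gateway line for the nodes that need one.

```shell
#!/bin/sh
# Hypothetical helper: print a static stanza for /etc/network/interfaces.
# $1 = interface, $2 = address, $3 = netmask, $4 = gateway (optional)
static_stanza() {
    printf 'auto %s\niface %s inet static\n\taddress %s\n\tnetmask %s\n' \
        "$1" "$1" "$2" "$3"
    # Only emit a gateway line when a gateway was given.
    if [ -n "$4" ]; then
        printf '\tgateway %s\n' "$4"
    fi
}
```

For example, `static_stanza eth0 192.168.10.2 255.255.255.0` prints the stanza for the second nginx container, which can then be pasted into the node configuration.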

On all three nginx containers, start nginx and php servers:

service php5-fpm start

service nginx start

– Assign an IP to the management browser in the same subnet as BIG-IP management IP

192.168.0.222/24 for gns3/webterm-2

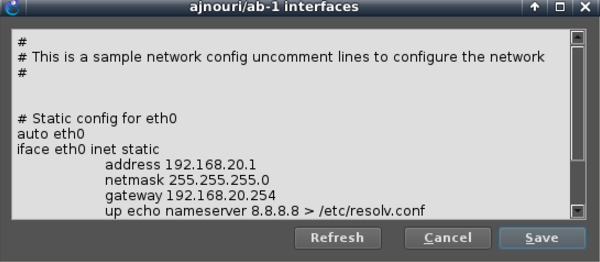

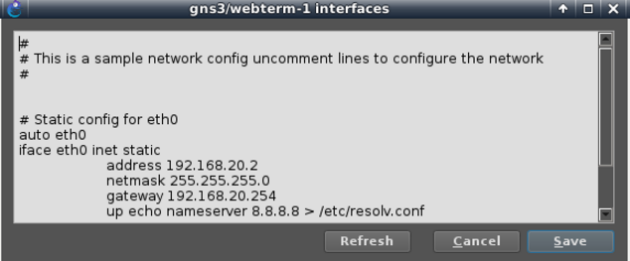

– Assign an address, default gateway and DNS server to the ab and webterm-1 containers.

Make sure both client devices resolve the host ajnouri.local to the BIG-IP address 192.168.20.254:

echo "192.168.20.254 ajnouri.local" >> /etc/hosts
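Running the `echo` line more than once appends duplicate entries. The sketch below appends the entry only when the name is not already listed. `add_host_entry` is a hypothetical helper, and the hosts file is passed as a parameter so it can be tried on a scratch file first.

```shell
#!/bin/sh
# Hypothetical helper: append "IP NAME" to a hosts file unless NAME is already listed.
# $1 = IP address, $2 = host name, $3 = hosts file (for example /etc/hosts)
add_host_entry() {
    # awk exits 0 when NAME appears as a host name (any field after the address).
    if awk -v name="$2" '
        { for (i = 2; i <= NF; i++) if ($i == name) found = 1 }
        END { exit !found }
    ' "$3" 2>/dev/null; then
        return 0    # already present, nothing to do
    fi
    echo "$1 $2" >> "$3"
}
```

On the client containers this would be called as `add_host_entry 192.168.20.254 ajnouri.local /etc/hosts`.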

– The Openvswitch containers don’t need to be configured; each acts like a single VLAN.

– Start the topology

4. Setting F5 Big-IP interfaces

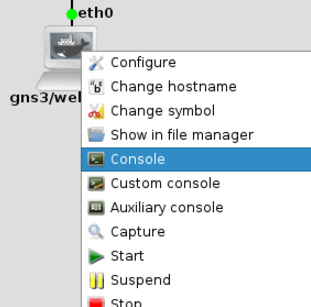

To manage the load balancer from webterm-2, open a console to the container; this opens Firefox inside the container.

Browse to the VM management IP https://192.168.0.151, add an exception for the certificate, and log in with the F5 BIG-IP default credentials admin/admin.

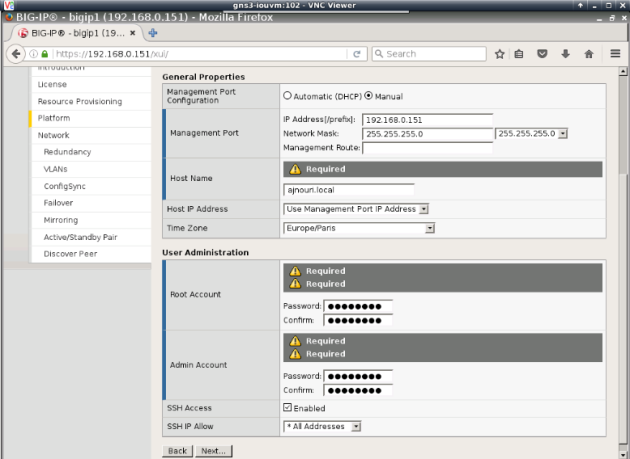

Go through the initial configuration steps.

– You will have to set the hostname (ex: ajnouri.local) and change the root and admin account passwords.

You will be logged out so that the password changes take effect; log back in.

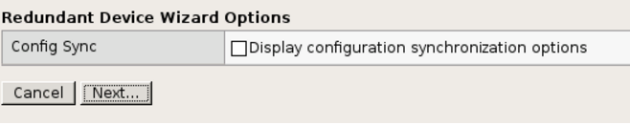

– For the purpose of this lab, no redundancy or high availability is configured.

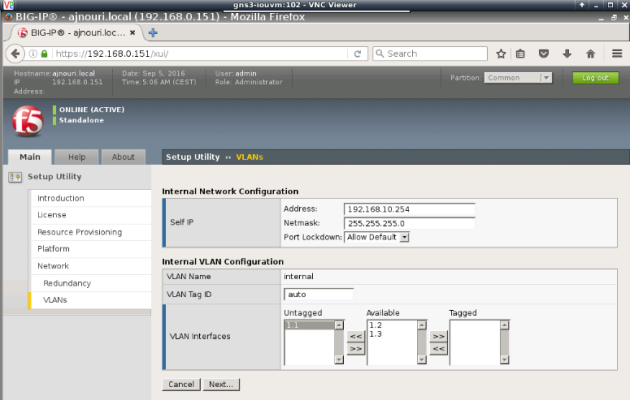

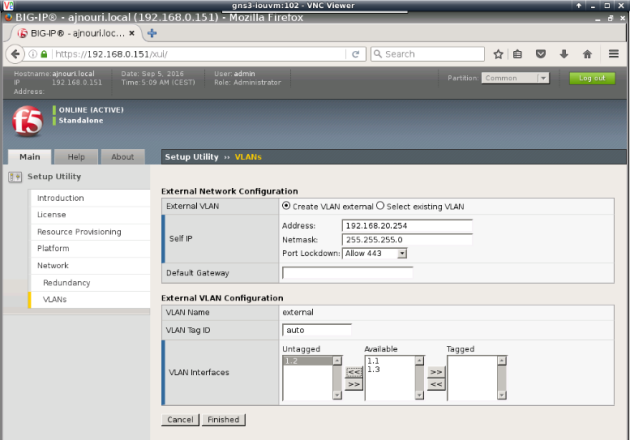

– Now configure the internal (real servers) and external (client side) VLANs with their associated interfaces and self IPs.

(Self IPs are the equivalent of VLAN interface IPs in Cisco switching.)

Internal VLAN (connected to web servers):

External VLAN (facing clients):

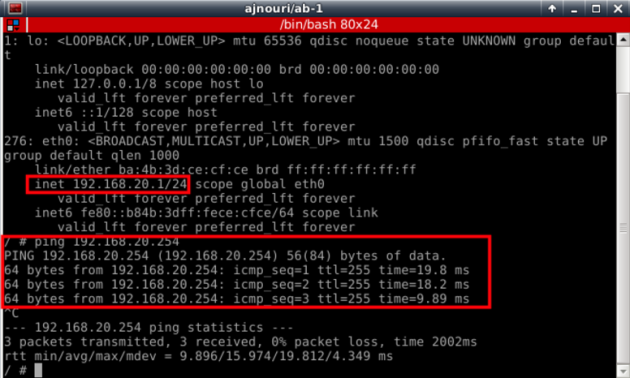

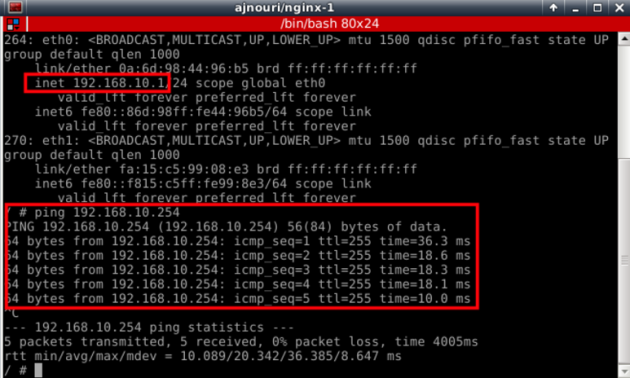
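The same VLAN and self IP settings can also be sketched from the BIG-IP command line with `tmsh`, as an alternative to the web interface used in this article. Everything specific here is an assumption: the function name `configure_vlans` is hypothetical, the interface numbers 1.1 and 1.2 depend on how the VM adapters are mapped, and the self IP addresses 192.168.10.254 and 192.168.20.254 are taken from the addressing used elsewhere in this lab.

```shell
#!/bin/sh
# Sketch only: meant to be run from the BIG-IP bash prompt as root.
# Interface numbers and self IP addresses are assumptions; adjust them to your topology.
configure_vlans() {
    # Internal VLAN (web servers side)
    tmsh create net vlan internal interfaces add { 1.1 { untagged } } || return 1
    tmsh create net self internal_self address 192.168.10.254/24 \
        vlan internal allow-service default || return 1

    # External VLAN (clients side)
    tmsh create net vlan external interfaces add { 1.2 { untagged } } || return 1
    tmsh create net self external_self address 192.168.20.254/24 \
        vlan external allow-service default || return 1

    # Persist the configuration
    tmsh save sys config
}
```

Call `configure_vlans` once on the BIG-IP; it stops at the first command that fails.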

5. Connectivity check between devices

Now make sure you have successful connectivity from each container to the corresponding Big-IP interface.

Ex: from ab container

Ex: from nginx-1 server container
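A minimal sketch of such a check, assuming `ping` is available in the containers: the hypothetical helper `check_reachable` sends three echo requests and reports the result. The addresses in the comments are assumptions: 192.168.20.254 for the BIG-IP external side and 192.168.10.254 for the internal self IP.

```shell
#!/bin/sh
# Hypothetical helper: report whether an address answers to ping.
check_reachable() {
    if ping -c 3 "$1" >/dev/null 2>&1; then
        echo "$1 reachable"
    else
        echo "$1 unreachable"
        return 1
    fi
}

# From the ab container (external side):
#   check_reachable 192.168.20.254
# From the nginx-1 container (internal side):
#   check_reachable 192.168.10.254
```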

The interface connected to your host network will get its IP parameters (address, gateway and DNS) from your DHCP server.

6. Load balancing configuration

Back to the browser on webterm-2.

For BIG-IP to load balance HTTP requests from clients to the servers, we need to configure:

- Virtual server: the single entity visible to clients

- Pool: associated with the virtual server; contains the list of real web servers to balance between

- Algorithm: used to load balance between members of the pool

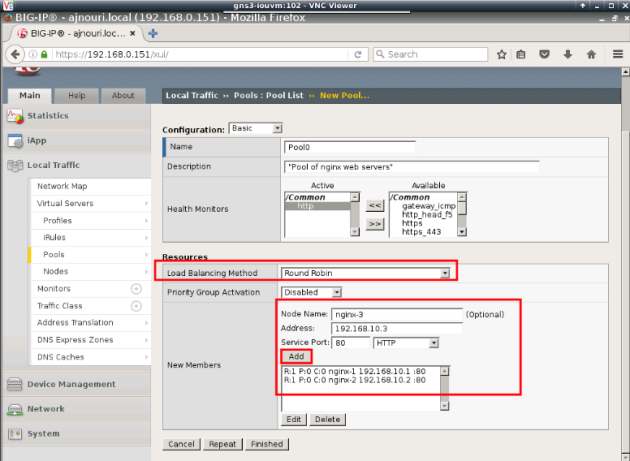

– Create a pool of web servers “Pool0” with “RoundRobin” as algorithm and http as protocol to monitor the members.

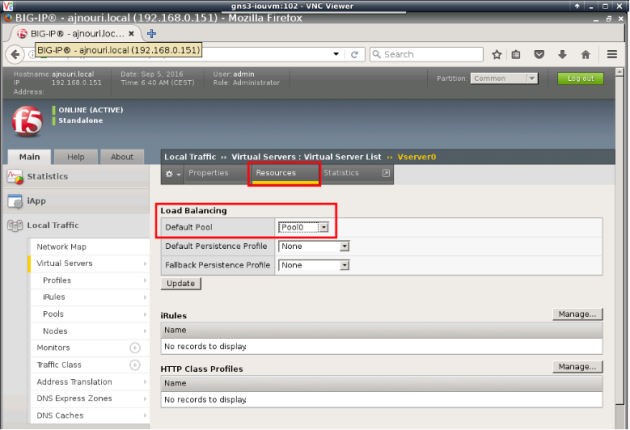

– Associate the virtual server “VServer0” with the pool “Pool0”

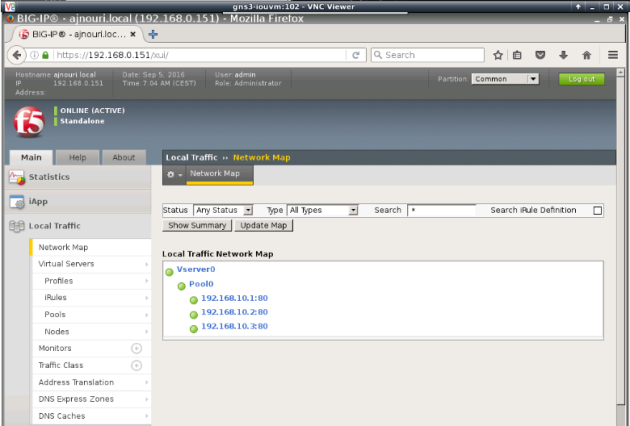
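For readers who prefer the command line, the pool and virtual server can be sketched with `tmsh` on the BIG-IP. This is not the method used in the article (which uses the web interface), and several values are assumptions: the function name `configure_load_balancing` is hypothetical, 192.168.10.1 is assumed for the first nginx container, port 80 is assumed for the members, and the destination 192.168.20.254:80 is taken from the address that ajnouri.local resolves to in this lab.

```shell
#!/bin/sh
# Sketch only: meant to be run from the BIG-IP bash prompt as root.
# Member addresses, ports and the virtual server destination are assumptions.
configure_load_balancing() {
    # Pool of real servers, round-robin algorithm, HTTP health monitor
    tmsh create ltm pool Pool0 load-balancing-mode round-robin monitor http \
        members add { 192.168.10.1:80 192.168.10.2:80 192.168.10.3:80 } || return 1

    # Virtual server facing the clients, using Pool0 as its default pool
    tmsh create ltm virtual VServer0 destination 192.168.20.254:80 ip-protocol tcp \
        profiles add { http } pool Pool0 || return 1

    # Persist the configuration
    tmsh save sys config
}
```

Call `configure_load_balancing` once on the BIG-IP; `tmsh list ltm virtual VServer0` then shows the resulting object.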

Check the network map to see if everything is configured correctly and monitoring shows everything OK (green)

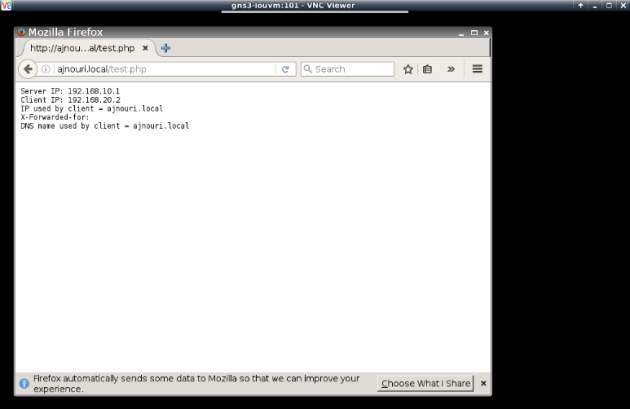

From the client container webterm-1, you can start a Firefox browser (console to the container) and test the server name “ajnouri.local”.

If everything is OK, you’ll see the PHP page showing the real server IP used, the client IP and the DNS name used by the client.

Every time you refresh the page, you’ll see a different server IP used.
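The rotation seen on each refresh is what round robin means: request number i goes to pool member i modulo N. The sketch below is a conceptual illustration only, not BIG-IP code; `pick_member` is a hypothetical helper, and the member addresses passed to it are up to the caller.

```shell
#!/bin/sh
# Conceptual round robin: pick the member for a given request number.
# $1 = request number (0-based), remaining arguments = pool members
pick_member() {
    request=$1
    shift
    [ "$#" -gt 0 ] || return 1      # no members, nothing to pick
    skip=$(( request % $# ))        # position of the member in the list
    shift "$skip"
    printf '%s\n' "$1"
}
```

For example, `for i in 0 1 2 3; do pick_member "$i" 192.168.10.1 192.168.10.2 192.168.10.3; done` prints the three addresses in order and then starts again with the first one.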

7. Performance testing

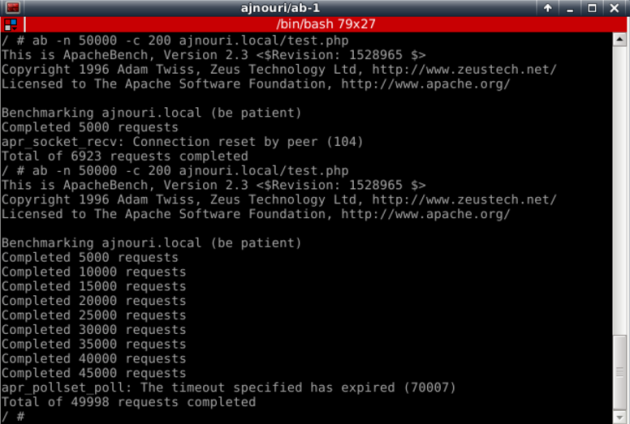

With the Apache Benchmark container ajnouri/ab, we can generate client requests to the load balancer virtual server by its hostname (ajnouri.local).

Let’s open an aux console to the container ajnouri/ab and generate 50,000 requests, 200 at a time, to the URL ajnouri.local/test.php:

ab -n 50000 -c 200 ajnouri.local/test.php

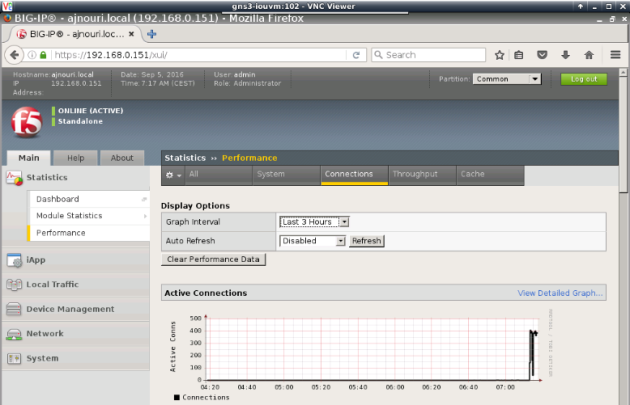

8. Monitoring load balancing

Monitoring the load balancer performance shows a peak of connections corresponding to the requests generated by Apache Benchmark.

In the upcoming part 2, the three web server containers are replaced with a single container in which we can spawn as many servers as we want (Docker-in-Docker). We will also test a custom Python client script container that generates HTTP traffic from spoofed IP addresses, as opposed to a container (Apache Benchmark) that generates traffic from a single source IP.

Hi, this is one of the most well done and complete guides I’ve ever seen, in my long long experience 🙂 My compliments to you!!! With your very precious help, I installed the dockers on gns3 and connected f5 vm to it – Now I have a little problem.. when I add dockers on the gns3 scheme, on console it says “server error from http://192.168.1.12:3080: gns3/openswitch-1: Docker has returned an error: 500 invalid hostname format: gns3/openvswitch-1 – And, if I click on the appliance, gns3 says “node isn’t initialized” and cannot launch configuration or start it. Could you please help me? Thanks and still congratulations!!! 🙂

Hi Daniela,

Thanks for your encouragements and I am glad you found it useful.

To avoid this error, you’ll have to give a name to the container with no slash “/” in it.

By default, GNS3 derives the name from the Docker registry format “account/repository”.

In the latest versions of GNS3, the slash is automatically removed.

I updated the images with a note.

Thanks for your contribution.

Hi again! Thanks, I corrected the name and it worked 🙂 Now I’ve a little problem with f5 load balancing… I read your article for many times, but I didn’t find where you setted the php.test on f5 virtual appliance.. I think there was a missed step.. I didnt’ find where you configured the virtual server VServer0, there is no figure for it and so I did I by my own but I think I failed.. Could you please let me know how to enable php.test on f5 test so that I can reach it from ajnouri ab server and test connections?

Many thanks and still my compliments for all!!! :-)))

Daniela

Hi Daniela,

test.php is a file I added to the nginx server image; it is not on the load balancer.

As soon as you configure the virtual server, the pool and the algorithm on F5, it will handle all your requests to “the web server” and load balance them to the real backend servers (the nginx containers, where the test.php file resides).

Remember, the client isn’t aware of the LB; from its point of view it is talking to “the web server”.

So, if you can already get back the page ajnouri.local (in my case), you only need to query the test.php page to get more information about the query.

Thanks for your prompt reply 🙂 I tried again but something is wrong in my job.. 😦 If I try to send 50000 connections from ajnouri-ab-1 to ajnouri.local, I receive the following error:

“Benchmarking ainouri.local (be patient)

apr sockaddr_info_get() for ajnouri.local: Name or service not known (670002)

And if I open mozilla firefox from webterm-1 console and try to reach http://ajnouri.local/test.php it says ” Unable to connect – Firefox can’t establish a connection to the server ajnouri.local.”

I can ping ajnouri.local from webterm-1 and it correctly resolves to 192.168.20.254… but cannot reach test.php…

Could you please tell me if I need to configure something of particular on virtual server vserver0? I created the pool, looked in network map and all is ok, the 3 physical servers are green, the only wrong thing is that I cannot reach test.php.. 😦

Still many thanks for your help!

Daniela

When trying to browse http://ajnouri.local from webterm-1, can you capture traffic between F5 and Openvswitch-2 ?

I would like to know whether web traffic leaves webterm-1. Alternatively, run tcpdump from the F5 console and see whether web traffic is received on the external VLAN.

Hi, tried tcpdump from f5 console when I start a http connection from webterm-1, but nothing is shown on external vlan.. Maybe a wrong configuration of my f5? I used networks and ip’s on your guide, only changed the flat network using my lan (192.168.1.0) for management network..

If I look in f5 ifconfig I see:

eth0 inet address 192.168.1.6 (my home network)

external: 192.168.20.254

internal: 192.168.10.254

I used vmware workstation and the networks automatically assigned on it for f5 ve are:

host-only vmnet2 192.168.136.0

host-only vmnet5 10.128.1.0

host-only vmnet6 10.128.10.0

host-only vmnet7 10.128.20.0

I didn’t change anything here..

Don’t know which can be the problem.. :-((

Many thanks again,

Daniela

Hi, Its a good post, but some information is missing.

If we create a Virtual Server vServer0, there is an destination IP and type which is not shown.

Can you advise what should be the details for type and destination IP ?

Im getting these errors while starting ‘webterm’ , can you please check it?

Server error from http://192.168.218.128:3080: webterm-1: Please install Xvfb and x11vnc before using the VNC support

Server error from http://192.168.218.128:3080: webterm-1: Please install Xvfb and x11vnc before using the VNC support

Make sure xvfb and x11vnc are installed in GNS3 VM or in your host if it is a linux.

I’m running GNS3 1.5.3 with GNSVM 1.5 in VMWare Workstation 12.5.2 on Windows10.

Is there any command I can run to install/upgrade the GNS3VM for xvfb and x11vnc errors?

For everyone thinking about the missing Vserver0 step.

Local Traffic -> Virtual Servers -> Create

GENERAL PROPERTIES:

Name: Vserver0

Type: Standard

Source address: 192.168.20.0/24 (or 0.0.0.0/0 for everything)

Destination: 192.168.10.10 (This will be a Virtual Address which you will use to surf to from the client)

Service Port: 80 HTTP

CONFIGURATION:

Leave default settings with protocol TCP

CONTENT REWRITE/ACCELERATION:

Leave default

RESOURCES:

Default Pool: The node pool you created

Test from webterm-1 by surfing to 192.168.10.10/test.php (or whatever IP you set)

Press CTRL+F5 a couple of times to try it and see Server IP change on the webpage.

Hello Ajnouri,

Thank you for the good post.

Can you or somebody in this thread be kind enough to share how to install xvfb and x11vnc in GNS3 VM ( I am using Windows 10 + VM Workstation 12.5.7)? Thanks!

Never mind.. Figured it out 🙂

Well done Victor 🙂

Getting following error while realizing the above lab on GNS3 (v 2.1.4); vmware workstation (14.1.1 build-7528167)

bridge ‘ethernet0.vnet’ already exist: uBridge version 0.9.13 running with WinPcap version 4.1.3 (packet.dll version 4.1.0.2980), based on libpcap version 1.0 branch 1_0_rel0b (20091008)

Hypervisor TCP control server started (IP 127.0.0.1 port 50227).

nio_ethernet_open: unable to open device ‘\Device\NPF_{1FF91FFE-2C5F-49F4-B908-6FC6A7C8A022}’: Error opening adapter: The system cannot find the device specified. (20)

Please Help

Issue resolved by upgrading Wireshark (trial and error), but now NGINX seems unresponsive, with the following in the console logs:

nginx-1 console is now available… Press RETURN to get started.

*** Running /etc/my_init.d/00_regen_ssh_host_keys.sh…

*** Running /etc/rc.local…

*** Booting runit daemon…

*** Runit started as PID 50

tail: unrecognized file system type 0x794c7630 for ‘/var/log/syslog’. please report this to bug-coreutils@gnu.org. reverting to polling

[11-Apr-2018 13:18:36] NOTICE: fpm is running, pid 59

[11-Apr-2018 13:18:36] NOTICE: ready to handle connections

[11-Apr-2018 13:18:36] NOTICE: systemd monitor interval set to 10000ms

Apr 11 13:18:36 nginx-1 syslog-ng[61]: syslog-ng starting up; version='3.5.3'

Hello, nice tutorial. Could you maybe do the exact same thing in GNS3, but with the appliance from the GNS3 marketplace? I mean do the same thing but without VMware. I tried, but I have no connectivity. I will also place a post on the GNS3 help forum this evening.

Thank you!

I’ve manage to have connectivity in gns3 without the vm, only with the appliance. Thank you anyway!

ajnouri , thanks for the wonderful tutorial. Where can I find the part 2?

amazing tutorial thanks!